Convergent and Discriminant Validity

Lately I've seen several research projects where almost no thought was given to the validity of their methods. This post looks at the question from a non-technical perspective.

A common pattern for research results in industry is, "We did X, and the answer was Y, and this is useful because Z." What's missing is any evidence that the connections from X to Y to Z are valid. Are there good reasons to believe that X appropriately measured Y? And that Y correctly implies Z?

One example I've recently discussed concerns Kano Analysis. I've argued that Kano items do not assess the constructs they claim (thus, in my schema above, X does not say Y), and the Kano results do not imply the decisions that stakeholders want to make (in the schema, Y does not imply Z). I won't repeat the argument but note it as an example.

Another example involves claims I've seen that LLM chatbots demonstrate a high, human-like "IQ." On the contrary, IQ tests don't give valid answers when applied to computers (X does not say Y), and their results say nothing about intelligence relative to humans (Y does not imply Z). Again, this post is not about those details; it's just an example.

Many factors contribute to such research errors, including motivated reasoning, magical thinking, ignorance, and naivete. Here I'll focus on a partial antidote: attention to convergent and discriminant validity.

Convergent Validity

Convergent validity is the degree to which different, concurrent assessments of the same concept give similar answers. Let's consider a possible survey item for a hypothetical smartphone feature (we'll assume that the "SuperThunder" feature has been explained):

For your next smartphone, would you like a SuperThunder charging cable?

🔲 YES 🔲 NO

Suppose 80% answer yes. The product team will be excited. "80% of users want SuperThunder!"

What's wrong? There are many reasons to answer "yes" besides wanting the feature. These include scale and acquiescence bias (answering yes because it feels "nice" or gratifying), motivated responding (wanting to qualify for the survey or some other benefit), position bias (perhaps "yes" is easier to select), and the absence of any cost for endorsing a feature "just in case" they want it later (there is no threshold or tradeoff in responding).

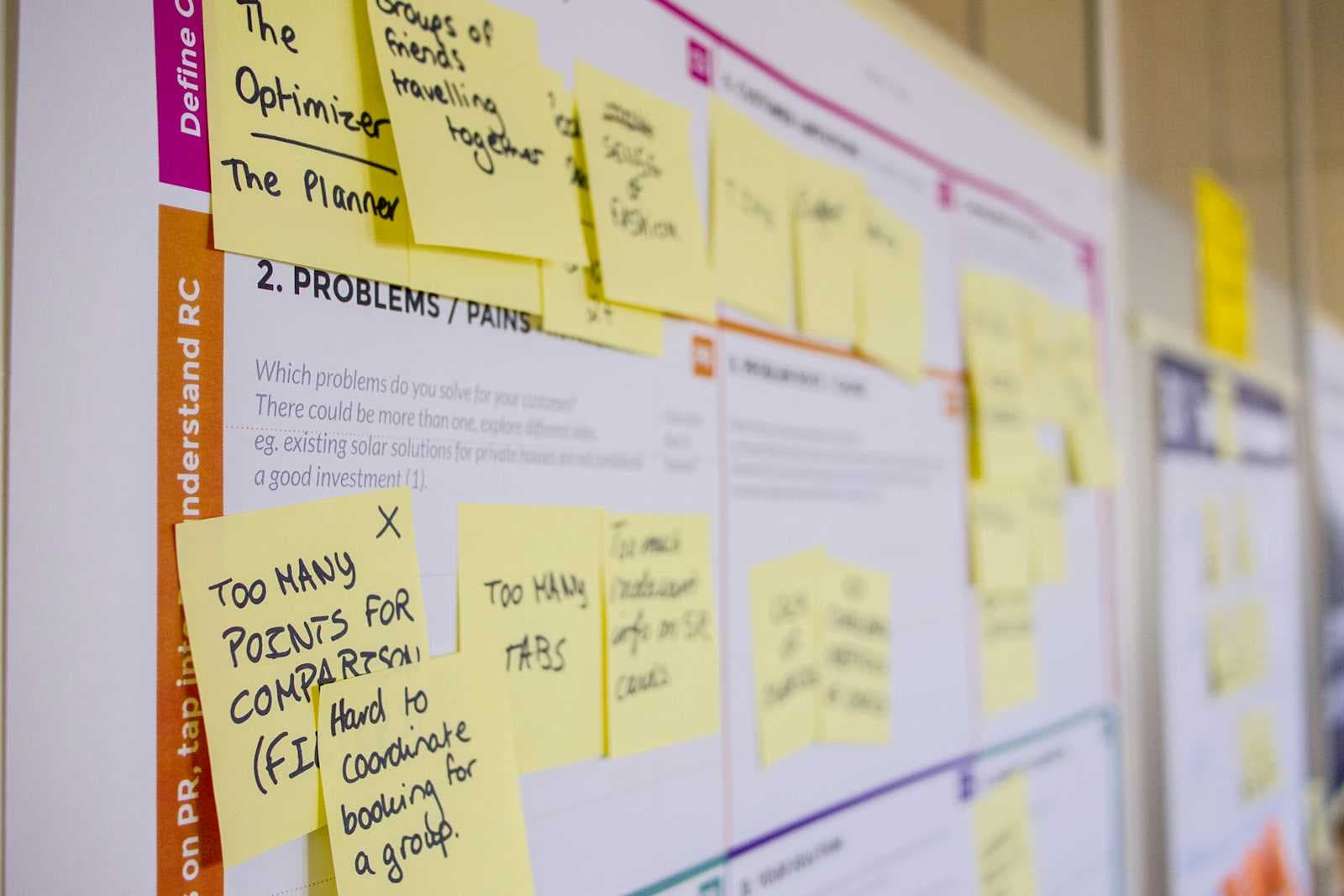

Establishing convergent validity means showing that other methods of assessment agree with a claim. For example, we might assess how often users would pay for SuperThunder when choosing a product (using conjoint analysis); whether SuperThunder ranks highly compared to other features (using MaxDiff); whether it would solve an important use case (perhaps using qualitative research); or whether users actively use it in prototypes (pre-release A/B testing).

In short, if we measure something using multiple methods and they agree, we are establishing convergent validity. But that's not enough ...

Discriminant Validity

Discriminant validity is the degree to which a measurement differs appropriately from measurements of other, unrelated things. Suppose our smartphone survey assesses four features and finds:

SuperThunder: 80% want it, with $8 willingness to pay (WTP)

BigCloud: 82% want it, with $12 WTP

MegaCamera: 79% want it, with $14 WTP

OrangutanGlass: 84% want it, with $11 WTP

Stakeholders will love those results! "Users want all of our features AND they are willing to pay for them!" We went way beyond most research by using two methods and demonstrating convergent validity, right?

But did we? Another possibility is that the respondents simply went along with everything. Perhaps they believe that positive answers will qualify them for future projects, or they just want to be nice. Evidence of discriminant validity would show that the results for SuperThunder differ appropriately from the results for something else, or from results obtained with a different method.
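One quick way to see the problem in the four-feature results is to check how little the stated preference shares vary. The Python sketch below is only an illustration, not a formal test: the function names and the 10-point `MIN_RANGE` cutoff are hypothetical choices made for this example, and a narrow spread is merely consistent with (not proof of) respondents endorsing everything.

```python
# Hypothetical survey results from the post:
# feature -> (% answering "yes", mean willingness to pay in $).
FEATURES = {
    "SuperThunder": (80, 8),
    "BigCloud": (82, 12),
    "MegaCamera": (79, 14),
    "OrangutanGlass": (84, 11),
}

# Assumed cutoff in percentage points, chosen only for illustration.
MIN_RANGE = 10


def share_range(features):
    """Return (lowest share, highest share, range) of the stated preference shares."""
    shares = [share for share, _wtp in features.values()]
    return min(shares), max(shares), max(shares) - min(shares)


def looks_undifferentiated(features, min_range=MIN_RANGE):
    """Flag results whose stated preference shares fall within a narrow band.

    A narrow band is consistent with acquiescence or "everything is good"
    responding; it does not prove it.
    """
    _low, _high, spread = share_range(features)
    return spread < min_range


low, high, spread = share_range(FEATURES)
print(f"Stated preference ranges from {low}% to {high}% ({spread} points)")
print("Undifferentiated?", looks_undifferentiated(FEATURES))
```

All four features land within a few points of each other, which is exactly the pattern that should prompt a search for discriminant evidence rather than a celebration.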

For example, suppose we found:

SuperThunder: 80% stated preference; WTP +$8; 90% continue using it in a pilot; 43% say it would help with photos; 13% say it would help with doing dishes.

MiniThunder: 32% stated preference; WTP -$10; 80% continue using it in a pilot; 38% say it would help with photos; 18% say it would help with doing dishes.

These results would show convergent validity for the stated preference item because it agrees with the assessments from willingness to pay and pilot usage. SuperThunder has convergent support relative to MiniThunder because its stated preference and WTP agree in direction, and both are higher than MiniThunder's. It also has convergent support on its own from its 90% pilot retention rate. That rate is congruent with the stated preference, even though it is not very different from MiniThunder's 80% (maybe users were stuck with MiniThunder even if they disliked it).
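The direction-of-agreement reasoning for SuperThunder vs. MiniThunder can be sketched in a few lines of Python. This is a minimal sketch using the post's hypothetical numbers; the `FeatureResult` class and `direction_agreement` function are illustrative names, not part of any survey or analytics library.

```python
from dataclasses import dataclass


@dataclass
class FeatureResult:
    name: str
    stated_pref: float      # % who say they want the feature
    wtp: float              # willingness to pay in $ (may be negative)
    pilot_retention: float  # % still using the feature in the pilot


SUPER = FeatureResult("SuperThunder", stated_pref=80, wtp=8, pilot_retention=90)
MINI = FeatureResult("MiniThunder", stated_pref=32, wtp=-10, pilot_retention=80)

INDICATORS = ("stated_pref", "wtp", "pilot_retention")


def direction_agreement(a, b):
    """For each indicator, return (a minus b, whether a scores higher than b)."""
    result = {}
    for field in INDICATORS:
        diff = getattr(a, field) - getattr(b, field)
        result[field] = (diff, diff > 0)
    return result


comparison = direction_agreement(SUPER, MINI)
for field, (diff, higher) in comparison.items():
    print(f"{field}: difference {diff:+}, SuperThunder higher: {higher}")
print("All indicators agree in direction:", all(h for _d, h in comparison.values()))
```

All three indicators point the same way, but the differences are not equally large: the 10-point retention gap is much smaller than the 48-point stated preference gap, which matches the caution above about pilot retention.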

But as we've seen, convergence alone is not enough. These results add evidence of discriminant validity because endorsement is much lower for areas that should be unrelated to a SuperThunder charging cable: 43% say it would help with photos, and 13% say it would help with doing dishes (yes, that's a joke. Sort of.)
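The discriminant check can be expressed the same way: compare the endorsement of the construct we care about with endorsements of claims that should be unrelated. In this Python sketch, the 20-point `MIN_GAP` cutoff and the `discriminant_gaps` helper are assumptions made for illustration; in real work the expected gap depends on the items and the population.

```python
# SuperThunder endorsement rates (%) from the post: the construct of interest
# (wanting the charging cable) and two claims that should be unrelated to a cable.
TARGET_SHARE = 80
UNRELATED_SHARES = {"helps with photos": 43, "helps do dishes": 13}

# Assumed minimum gap in percentage points for calling an item "distinct";
# chosen only for illustration.
MIN_GAP = 20


def discriminant_gaps(target, unrelated, min_gap=MIN_GAP):
    """Map each unrelated item to (gap below the target, whether gap >= min_gap)."""
    return {
        item: (target - share, target - share >= min_gap)
        for item, share in unrelated.items()
    }


gaps = discriminant_gaps(TARGET_SHARE, UNRELATED_SHARES)
for item, (gap, distinct) in gaps.items():
    print(f"{item}: {gap} points below the target; distinct: {distinct}")
```

If respondents were simply endorsing everything, the unrelated items would sit close to 80% and the gaps would be small, so this check would fail.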

Those results demonstrate that the support for SuperThunder is not simply due to response style or the overall "goodness" of everything. There is an appropriate pattern of both agreement and disagreement in ways that make sense.

Would that prove that SuperThunder will be valuable? No; there are other parts to that question, such as analysis of the unmet needs it addresses. Still, by attending to convergent and discriminant validity, we arrive at a much better and more convincing answer than by naively reporting stated preference items.

Final notes

Astute readers will notice that I'm conflating psychometric questions of validity (i.e., that multiple assessments have a particular pattern of agreement) with the related but conceptually quite different question of the strength of evidence for a business case (i.e., that multiple indicators combine to strengthen the evidence for some decision).

I agree that they're conflated here. This post is an introduction and illustration; you can find depth and details in the references below. Yet I'd also say that in applied industry research, there should be a close association between the validity of assessments and the logical inferences drawn from them.

On the other side of the discussion, I don't want to suggest that all research is invalid, that research must be perfect, or that it is impossible to make decisions with uncertain or imperfect data. Rather, I believe that too much industry research is naively simple and is too often content when it finds an answer that someone likes. We can do much better without massive effort or expecting perfection.

To learn more in both breadth and depth on validity, I recommend:

Any standard reference on psychometrics, such as RM Furr (2021), Psychometrics: An Introduction. Sage Publications.

Chapters 4 and 5 (UX Research; Statistics) in the Quant UX Book, where Kerry Rodden and I discuss the foundations of solid research and statistical measurement.

Best wishes! I hope this post will inspire you to consider the ways that results may be due to factors other than those that stakeholders assume.